Ready to use the cloud?

So you got excited about the cloud and its advantages, and you are thinking about moving your workloads from on-premise to the cloud. If these workloads were designed with a cloud-native approach, the move can be fairly easy. But what if they were not? How do you get there when the workloads need a redesign to run well in the cloud? Do you redesign everything and move it all at once, because the application perhaps cannot handle the latency between the part that stays on-premise and the part that moves to the cloud? Suddenly this exciting move to the cloud becomes a terrifying redesign and a big-bang migration.

Luckily, it does not have to be that way: VMware Cloud on AWS can serve as an intermediate step that brings your on-premise VMware environment closer to AWS. And this is just one of the many use cases of the VMware Cloud (VMC) on AWS solution.

What is VMC on AWS?

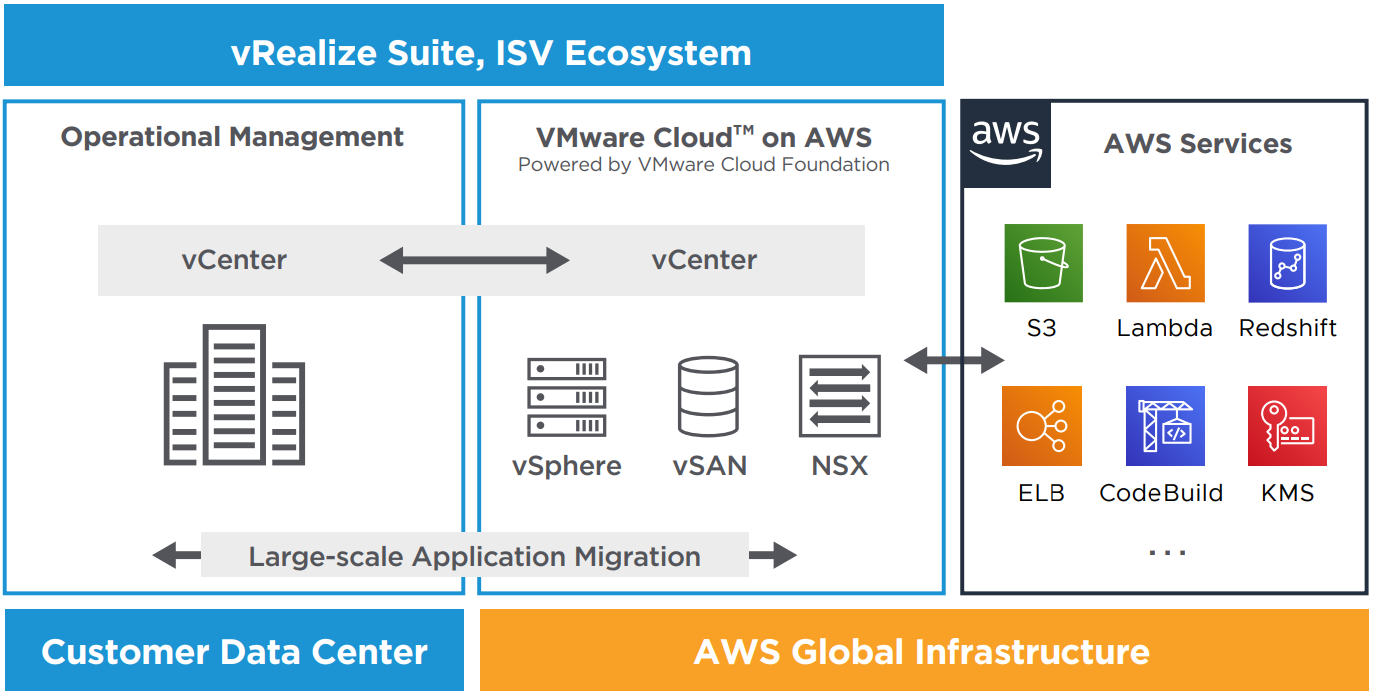

VMC on AWS is a VMware Software-Defined Data Center (SDDC) running on AWS EC2 bare-metal instances. VMware sells, operates, and supports this solution together with its partners. It uses the same building blocks as an on-premise VMware environment (vSphere, vSAN, and NSX), so it offers the same operational consistency as an on-premise VMware SDDC.

You can link your on-premise environment with the VMC on AWS environment and move virtual machines back and forth between the two. At the same time, the VMC on AWS environment sits close to the rest of the AWS services, so the virtual machines running in it can use those services with low latency.

When to use VMC on AWS?

As the introduction stated, you can use VMC on AWS to migrate your VMware workloads to AWS. The simplest scenario is a plain move to the cloud, which reduces your on-premise footprint and brings you closer to native cloud services. Such a migration can also serve as an intermediate step. Imagine that the contract with your datacenter provider is expiring, but the new location has not been decided yet. In that case, VMC on AWS can provide temporary infrastructure that keeps everything running with minimal setup effort.

VMware Cloud on AWS – Use cases

But what if your on-premise environment runs just fine and you only need extra capacity for a while? You can extend into VMC on AWS and run your dev/test workloads there, or add on-demand workloads during peak loads. Because NSX can extend your L2 networks, you do not even need to change your IP addresses. This also helps when capacity planning was wrong and the hardware needed to cope with higher-than-expected demand is not in place.

Similar to extending your datacenter, you can also use the solution as a second datacenter for when things go wrong in your primary one. Run a minimal cluster with enough storage for your replicated workloads and, combined with VMware Site Recovery Manager (SRM), use it as a disaster recovery site. If a disaster strikes and the cluster lacks CPU or memory, you can scale it out within minutes. Combining this scenario with the previous one, where you extend your datacenter and run your dev/test environment on VMC on AWS, is particularly powerful. You can still choose to power off your dev/test environment during a disaster, but you do not have to, since hosts can be added within minutes if needed.
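To get a feel for how far a minimal DR cluster has to scale out during a failover, here is a rough sizing sketch in Python. It is not a VMware sizing tool. The consolidation ratio (`vcpu_per_core`), the N+1 spare for vSphere HA (`ha_spare`), the function name and the example workload figures are all assumptions chosen for illustration, and hypervisor and management overhead are ignored. The host figures are the i3.metal numbers (36 physical cores, 512 GiB).

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class HostSpec:
    physical_cores: int
    memory_gib: int


# i3.metal per-host figures: 36 physical cores, 512 GiB of memory.
I3_METAL = HostSpec(physical_cores=36, memory_gib=512)


def hosts_for_failover(vcpus: int, memory_gib: int, host: HostSpec,
                       vcpu_per_core: float = 4.0, ha_spare: int = 1,
                       min_hosts: int = 3) -> int:
    """Rough number of hosts needed to run the recovered workloads.

    Hypothetical helper: vcpu_per_core (CPU consolidation ratio) and
    ha_spare (N+1 headroom for vSphere HA) are planning assumptions,
    and hypervisor/management overhead is ignored.
    """
    by_cpu = math.ceil(vcpus / (host.physical_cores * vcpu_per_core))
    by_memory = math.ceil(memory_gib / host.memory_gib)
    # The tighter resource drives the size; never go below the cluster minimum.
    return max(min_hosts, max(by_cpu, by_memory) + ha_spare)


if __name__ == "__main__":
    pilot_light_hosts = 3  # minimal DR cluster that holds the replicated data
    needed = hosts_for_failover(vcpus=800, memory_gib=6000, host=I3_METAL)
    print(f"scale out from {pilot_light_hosts} to {needed} hosts "
          f"(+{needed - pilot_light_hosts})")
```

With these made-up numbers, memory is the constraint (12 hosts' worth, plus one HA spare), so the script prints `scale out from 3 to 13 hosts (+10)`.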

I have mentioned dev/test workloads several times already: when building applications, the flexibility of adding and removing hosts is very handy. Between projects, you can scale down the number of hosts instead of keeping resources idle.

How to use VMC on AWS?

You can start with VMC on AWS with just one host. The Single Host SDDC is a starter configuration limited to 30 days, which offers customers a lower-cost entry point. To retain your data, you must upgrade the Single Host SDDC to a 3-host SDDC; otherwise, the single-host environment is deleted after 30 days. In terms of support, you get the same services from VMware and its partners as for a 3-host SDDC. However, the Single Host SDDC comes without an SLA: there is no redundancy against component or host failure, so you may lose your data.

A production cluster has a minimum of 3 hosts. This allows you to use RAID 1 (mirroring) for your virtual machines, with a “failures to tolerate” (FTT) of 1. If you want RAID 5 (FTT=1) or RAID 6 (FTT=2) for your virtual machines, you need at least 4 or 6 hosts respectively, because these erasure-coding policies spread data and parity across more hosts. In general, the more hosts in your cluster, the more space-efficient the storage policies you can use. Moreover, if you need a VMC on AWS environment that can survive the failure of a complete Availability Zone (AZ), you can choose a Multi-AZ deployment during setup. This deploys a cluster stretched over two AZs, with a vSAN witness in a third AZ. Each AZ requires a minimum of 3 hosts, which brings the minimum for a Multi-AZ deployment to 6 hosts.
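The trade-off between cluster size and storage efficiency can be made concrete with a little arithmetic. This Python sketch lists the usable capacity each vSAN policy would give for a cluster of a given size. The data fractions follow from how each policy lays out data (two full copies for RAID 1, 3 data + 1 parity for RAID 5, 4 data + 2 parity for RAID 6). The function name and the 15 TiB-per-host figure in the example are assumptions, and vSAN slack space, deduplication and compression are ignored.

```python
# Minimum hosts per vSAN storage policy.
# RAID-1 (mirroring, FTT=1): 3 hosts; RAID-5 (3+1, FTT=1): 4; RAID-6 (4+2, FTT=2): 6.
MIN_HOSTS = {"RAID-1": 3, "RAID-5": 4, "RAID-6": 6}

# Fraction of raw capacity that holds VM data under each policy.
DATA_FRACTION = {"RAID-1": 1 / 2, "RAID-5": 3 / 4, "RAID-6": 4 / 6}


def usable_tib(hosts: int, raw_tib_per_host: float) -> dict[str, float]:
    """Usable capacity (TiB) for every policy this cluster size permits.

    Hypothetical helper; ignores slack space, dedup/compression and
    witness components.
    """
    raw = hosts * raw_tib_per_host
    return {policy: round(raw * DATA_FRACTION[policy], 1)
            for policy, minimum in MIN_HOSTS.items() if hosts >= minimum}


if __name__ == "__main__":
    for n in (3, 4, 6):
        print(n, "hosts:", usable_tib(n, raw_tib_per_host=15.0))
```

At 6 hosts the sketch returns 45.0 TiB for RAID 1, 67.5 TiB for RAID 5 and 60.0 TiB for RAID 6: RAID 6 stores less than RAID 5 but tolerates two failures instead of one.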

VMware Cloud on AWS – Multi-AZ deployment

When setting up your SDDC, you can choose between two host types: i3.metal or r5.metal. The main difference between them is the storage architecture. The i3.metal has eight local NVMe SSDs, which offer better performance but a fixed compute/storage ratio. The r5.metal uses Amazon EBS (Elastic Block Store) volumes instead. Its storage can range from 15 TiB to 35 TiB per host, and when a host fails, the EBS volumes can be re-attached to the replacement host, which reduces the rebuild time. The two host types also differ in CPU and memory, where the r5.metal has the higher specifications. However, the choice between them will mostly come down to storage.

| | i3.metal | r5.metal |
|---|---|---|
| Logical processors | 72 | 96 |
| Physical cores | 36 | 48 |
| Memory (GiB) | 512 | 768 |
| Network performance (Gbps) | 25 | 25 |
| Storage | 8 x 1.9 TB NVMe SSD | EBS volumes (15 to 35 TiB) |
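Multiplying the per-host figures by the number of hosts shows how the two host types scale. The Python sketch below does that. It also converts the i3.metal's 8 x 1.9 TB of local NVMe into TiB (about 13.8 TiB raw per host, fixed) and treats the r5.metal's EBS capacity as a per-host choice between 15 and 35 TiB. The function names are hypothetical, and the results are raw capacity before any storage policy is applied.

```python
from dataclasses import dataclass
from typing import Optional

TIB = 2 ** 40  # bytes per TiB


@dataclass(frozen=True)
class HostType:
    physical_cores: int
    logical_processors: int
    memory_gib: int


# Per-host figures from the host-type comparison table.
HOST_TYPES = {
    "i3.metal": HostType(physical_cores=36, logical_processors=72, memory_gib=512),
    "r5.metal": HostType(physical_cores=48, logical_processors=96, memory_gib=768),
}


def cluster_totals(host_type: str, hosts: int) -> dict[str, int]:
    """Aggregate compute and memory for a homogeneous cluster."""
    h = HOST_TYPES[host_type]
    return {
        "physical_cores": h.physical_cores * hosts,
        "logical_processors": h.logical_processors * hosts,
        "memory_gib": h.memory_gib * hosts,
    }


def raw_storage_tib(host_type: str, hosts: int,
                    ebs_tib_per_host: Optional[float] = None) -> float:
    """Raw cluster storage in TiB, before any vSAN storage policy."""
    if host_type == "i3.metal":
        # Fixed: 8 local NVMe SSDs of 1.9 TB (decimal) each.
        return hosts * 8 * 1.9e12 / TIB
    if host_type == "r5.metal":
        # Flexible: EBS capacity chosen per host within 15-35 TiB.
        if ebs_tib_per_host is None or not 15 <= ebs_tib_per_host <= 35:
            raise ValueError("r5.metal needs ebs_tib_per_host between 15 and 35")
        return hosts * ebs_tib_per_host
    raise KeyError(host_type)


if __name__ == "__main__":
    print(cluster_totals("r5.metal", 4))
    print(round(raw_storage_tib("i3.metal", 4), 1))
    print(raw_storage_tib("r5.metal", 4, ebs_tib_per_host=20))
```

For four hosts, the i3.metal cluster always ends up with about 55.3 TiB raw. With the r5.metal you pick the storage per host independently of compute, which is the flexibility the EBS-backed design buys.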

In addition to operational consistency with an on-premise VMware SDDC, the VMC on AWS SDDC has some neat features that increase flexibility. You can manually add hosts to or remove hosts from your cluster within minutes, but you can also configure Elastic DRS (eDRS). This feature scales your cluster out or in automatically, using the resource management features of vSphere. When a certain capacity threshold is reached, eDRS adds a host to your cluster. Adding a host to an existing cluster takes around 10 to 15 minutes and adds both compute (CPU and memory) and storage capacity. eDRS also scales your cluster down when it is lightly loaded. The process of adding a host to the cluster, whether manually or through eDRS, is depicted below:
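To illustrate threshold-based scaling, here is a deliberately simplified Python model. It is not VMware's eDRS algorithm, which uses VMware-defined policies and thresholds. The thresholds (`scale_out_at`, `scale_in_below`), the 3-to-16 host bounds and the function name are assumptions for illustration. The model adds a host when any resource crosses the upper threshold. It removes one only when the projected utilization after removal would still be low, which avoids flapping between the two actions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Utilization:
    """Cluster-wide utilization as fractions between 0.0 and 1.0."""
    cpu: float
    memory: float
    storage: float


def scaling_decision(u: Utilization, hosts: int, min_hosts: int = 3,
                     max_hosts: int = 16, scale_out_at: float = 0.80,
                     scale_in_below: float = 0.50) -> str:
    """Hypothetical eDRS-like policy; thresholds and bounds are illustrative."""
    peak = max(u.cpu, u.memory, u.storage)
    if peak >= scale_out_at:
        # Any single resource under pressure triggers a scale-out.
        return "add host" if hosts < max_hosts else "at maximum"
    # Estimate the peak utilization if the same load ran on one host fewer.
    if hosts > min_hosts and peak * hosts / (hosts - 1) < scale_in_below:
        return "remove host"
    return "no change"


if __name__ == "__main__":
    print(scaling_decision(Utilization(cpu=0.85, memory=0.60, storage=0.50), hosts=4))  # add host
    print(scaling_decision(Utilization(cpu=0.30, memory=0.35, storage=0.40), hosts=6))  # remove host
    print(scaling_decision(Utilization(cpu=0.30, memory=0.30, storage=0.45), hosts=4))  # no change
```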

VMware Cloud on AWS - Automatic Cluster Configuration: adding a host

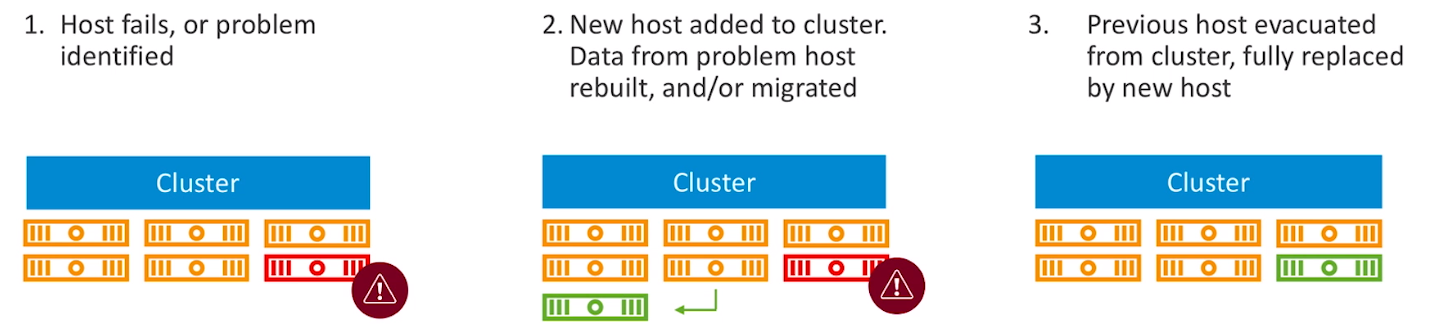

Furthermore, VMC on AWS reacts quickly when a component or host fails. It automatically inserts a new host into your cluster, and vSphere HA restarts the VMs that were running on the failed host. In the meantime, the data from the failed host is rebuilt on or moved to the new host. When this process is done, the failed host is removed from the cluster, leaving a healthy cluster with the same number of hosts as before the failure.
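The self-healing sequence can be modeled in a few lines of Python to show the invariant it preserves: the cluster ends up with the same number of hosts and no VM left on the failed one. This is a toy model with hypothetical names. VM placement here is simple round-robin rather than what vSphere HA actually does, and the vSAN data rebuild is only noted in a comment.

```python
from dataclasses import dataclass


@dataclass
class Cluster:
    hosts: list[str]
    vms: dict[str, str]  # VM name -> host the VM runs on


def self_heal(cluster: Cluster, failed: str, replacement: str) -> None:
    """Toy model of the host-replacement flow; mutates the cluster in place."""
    # 1. A replacement host is inserted into the cluster.
    cluster.hosts.append(replacement)
    healthy = [h for h in cluster.hosts if h != failed]
    # 2. VMs from the failed host are restarted on healthy hosts
    #    (round-robin here; vSphere HA uses its own placement logic).
    orphaned = sorted(vm for vm, host in cluster.vms.items() if host == failed)
    for i, vm in enumerate(orphaned):
        cluster.vms[vm] = healthy[i % len(healthy)]
    # 3. vSAN rebuilds or moves the failed host's data (not modeled).
    # 4. The failed host is removed once the rebuild is done.
    cluster.hosts.remove(failed)


if __name__ == "__main__":
    c = Cluster(hosts=["esx1", "esx2", "esx3"],
                vms={"web": "esx1", "app": "esx2", "db": "esx2"})
    self_heal(c, failed="esx2", replacement="esx4")
    print(c.hosts)  # ['esx1', 'esx3', 'esx4']
    print(c.vms)    # {'web': 'esx1', 'app': 'esx1', 'db': 'esx3'}
```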

VMware Cloud on AWS - Self Healing: recovering from a host failure

Because VMware manages the infrastructure of this environment for you, it also uses this flexibility when patching your hosts. At the beginning of the patch window, VMware adds an extra host to your cluster at no additional cost. This lets VMware patch all hosts while all your resources stay available, even when one of your regular hosts is in maintenance mode.
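A small Python sketch makes the effect of the extra host visible. It counts how many hosts remain available to workloads at each step of a rolling patch, with and without a temporary spare. It is a simplified model under stated assumptions: one host is in maintenance mode at a time, and the spare arrives already patched. The function name is hypothetical.

```python
def available_during_patching(regular_hosts: int, add_spare: bool = True) -> list[int]:
    """Hosts available to workloads at each step of a rolling patch.

    Hypothetical model: hosts are patched one at a time, and a spare
    host (if added) joins already patched and leaves at the end.
    """
    pool = regular_hosts + (1 if add_spare else 0)
    steps = []
    for _ in range(regular_hosts):
        # One regular host is in maintenance mode; its VMs run elsewhere.
        steps.append(pool - 1)
    return steps


if __name__ == "__main__":
    print(available_during_patching(4, add_spare=True))   # [4, 4, 4, 4]
    print(available_during_patching(4, add_spare=False))  # [3, 3, 3, 3]
```

With the spare, available capacity never drops below the four hosts you pay for; without it, every step runs one host short.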

How to leverage AWS services?

So your workloads now run on VMC on AWS, but how do you connect to AWS services? During setup of your SDDC, you connect it to an AWS account and select a VPC and subnet. The setup deploys several Elastic Network Interfaces (ENIs) into that VPC, one of which is active. These ENIs have a maximum throughput of 25 Gbps. You can then reach AWS services privately over this VPC connectivity. For AWS services with public endpoints, such as Amazon S3, you can set up VPC endpoints so that the traffic does not go over the internet.
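As an example of the VPC endpoint approach, the Python sketch below builds the parameters for an Amazon S3 gateway endpoint in the connected VPC. It can then optionally create the endpoint with boto3's `EC2.Client.create_vpc_endpoint`. The region, VPC ID and route-table ID are placeholders. Associating the endpoint with the route table that the connected subnet uses is an assumption about your setup, so check VMware's documentation on S3 access from the SDDC before relying on it. Creating the endpoint requires boto3 and AWS credentials, so that part only runs when you call it explicitly.

```python
def s3_gateway_endpoint_request(region: str, vpc_id: str,
                                route_table_ids: list[str]) -> dict:
    """Arguments for EC2 CreateVpcEndpoint, for an S3 gateway endpoint."""
    return {
        "VpcEndpointType": "Gateway",
        "VpcId": vpc_id,
        "ServiceName": f"com.amazonaws.{region}.s3",
        "RouteTableIds": route_table_ids,
    }


def create_endpoint(region: str, request: dict) -> str:
    """Create the endpoint and return its ID (needs boto3 and credentials)."""
    import boto3  # imported here so building the request works without boto3

    ec2 = boto3.client("ec2", region_name=region)
    response = ec2.create_vpc_endpoint(**request)
    return response["VpcEndpoint"]["VpcEndpointId"]


if __name__ == "__main__":
    # Placeholder IDs; replace them with the connected VPC and its route table.
    req = s3_gateway_endpoint_request(
        region="eu-west-1",
        vpc_id="vpc-0123456789abcdef0",
        route_table_ids=["rtb-0123456789abcdef0"],
    )
    print(req["ServiceName"])  # com.amazonaws.eu-west-1.s3
    # endpoint_id = create_endpoint("eu-west-1", req)
```

A gateway endpoint works by adding a route for the S3 prefix list to the route tables you associate with it, which is why the route-table IDs matter.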